EU AI Act: a practical guide for professional firms 2026

By Ivor Padilla and Gabriel Naranjo, co-founders of Gradion · Published on 10 April 2026 · Last updated: 10 April 2026 · 20 min read

The EU AI Act (Regulation (EU) 2024/1689, known in Spain as the Reglamento europeo de IA and in the general press as the "AI Act" or the "EU AI law") is the most talked-about piece of digital legislation of the past year. It is also the most misread in the context of a typical professional services firm. If you run a small or mid-sized law firm, accountancy practice, gestoría or notary's office in Spain, almost everything you can find on the subject is written for the wrong audience: AI developers, large tech companies or organisations with an entire compliance function. Very little is written with you in mind: the partner who already uses ChatGPT, Copilot or some kind of document classifier in day-to-day work and wants to know what, concretely, to do about it.

TL;DR: Regulation (EU) 2024/1689 sorts AI systems into four risk levels (unacceptable, high, limited and minimal) and separates providers (who build the AI) from deployers (who use it). Your firm is almost always a deployer, not a provider, and most of your systems sit in limited or minimal risk. Key dates: 2 Feb 2025 (prohibited practices and AI literacy), 2 Aug 2025 (general-purpose AI models and penalties), 2 Aug 2026 (general application), 2 Aug 2027 (high-risk via Annex I safety components). Fines for the prohibited practices go up to EUR 35 million or 7% of worldwide annual turnover, whichever is higher (for SMEs, whichever is lower). If you already comply with the GDPR and apply human oversight, what's left is two or three things, not twenty.

What Regulation (EU) 2024/1689 is, and why it matters even if you don't "build" AI

Regulation (EU) 2024/1689 is the first European — and, to our reading, the first worldwide — text that regulates AI systems horizontally. It does not regulate the data that flows through those systems: that remains the GDPR's job. It regulates the systems themselves, their design, their classification by risk, the obligations of those who build them and those who use them, and the sanction regime attached to failure.

You need to hold those two frames apart from the start to avoid confusion. When a client submits a query with personal information through a chatbot on your firm's website, two regimes apply simultaneously. Data protection — lawful basis, purpose, retention, data-subject rights, 72-hour breach notification — stays with the GDPR and, in Spain, with the AEPD; if you want the full map of those obligations, our pillar post on data protection in professional firms lays them out in order. The behaviour of the AI system — what it can do, what it cannot, how it must be overseen, what information it must give the user — is governed by the EU AI Act and, in the Spanish allocation of competences, by the newly created AESIA. We go through the data angle in detail in our post on the five GDPR principles applied to AI automation in professional firms; this one is the other side of the coin.

The first thing to internalise: the Regulation is not written for you as an occasional end user. It is written primarily for providers — the companies that develop the systems and put them on the market — and for deployers, which is what the Regulation calls those who use them in the context of a professional activity. That distinction is what matters most in the pages that follow.

Provider or deployer: which side are you on

Article 3 of Regulation (EU) 2024/1689 draws a line that most general-press coverage skips over: provider vs. deployer. A provider is the one who develops an AI system and puts it on the market under their own name — OpenAI, Anthropic, Mistral, Microsoft when it packages Copilot. A deployer is the one who uses that system under their own authority in the course of a professional activity — your firm. Nearly every small or mid-sized professional services firm in Spain is a deployer, not a provider, and that distinction changes which obligations in the Regulation actually apply to you.

Why this matters: the bulk of the Regulation — training-data requirements, risk management, technical documentation, conformity assessment, post-market monitoring, CE marking — is aimed at the provider. The deployer inherits a much smaller subset and, in most cases, a far more operational one: using the system as the provider instructs, training the team, applying human oversight and, where relevant, meeting specific transparency obligations. That subset is what we will unpack below.

Two practical caveats:

- If you configure, adjust or re-label a system to the point of putting it back on the market under your own name — for instance, commissioning a third party to build "your custom legal chatbot" and marketing it under your firm's brand — you may become a provider for AI Act purposes. The requirements shift dramatically. In our experience, it is rare for a firm to cross that line; but it is worth knowing before you sign anything with an integrator.

- Purely personal, non-professional use is outside the Regulation. If a partner at the firm uses ChatGPT at home to write a birthday message, that is not in scope. What is in scope is any use in the context of the firm's professional activity.
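To make the two caveats concrete, here is a minimal first-pass triage in Python. It is a sketch under our own simplifying assumptions: the function `ai_act_role` and its two yes/no inputs are hypothetical, and the real tests in Articles 3 and 25 contain more conditions (substantial modification, change of intended purpose) than two flags can capture.

```python
from enum import Enum


class Role(Enum):
    OUT_OF_SCOPE = "out of scope"
    PROVIDER = "provider"
    DEPLOYER = "deployer"


def ai_act_role(professional_use: bool, offered_under_own_name: bool) -> Role:
    """Hypothetical first-pass triage of the firm's role for one AI system.

    A screening aid only: it ignores the extra conditions in Articles 3 and 25.
    """
    if not professional_use:
        # Purely personal, non-professional use falls outside the Regulation.
        return Role.OUT_OF_SCOPE
    if offered_under_own_name:
        # Placing a system on the market or into service under the firm's own
        # name (e.g. a commissioned "custom legal chatbot") can make it a provider.
        return Role.PROVIDER
    # Using a third party's system under the firm's authority at work.
    return Role.DEPLOYER


# A partner drafting with ChatGPT at the office: the firm is a deployer.
print(ai_act_role(professional_use=True, offered_under_own_name=False))
```

The order of the checks mirrors the text: scope first, then the provider trigger, with deployer as the default for ordinary professional use.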

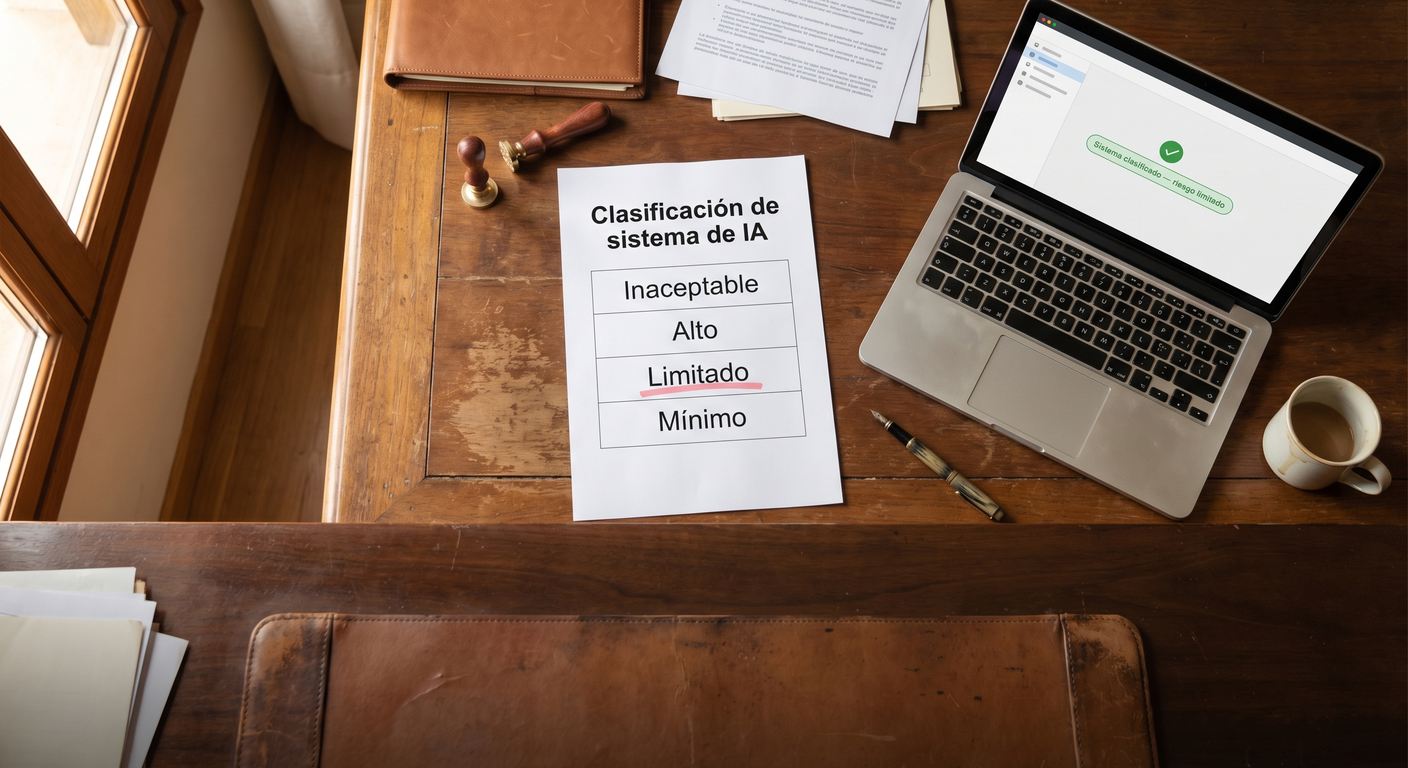

The four risk categories (and which one is yours)

The Regulation classifies AI systems into four risk categories: unacceptable, high, limited and minimal. Obligations rise as risk rises. Classification is the first operational decision for any firm: get it right and you know exactly which obligations apply to each system, and which you can set aside.

1. Unacceptable risk (Article 5) — prohibited

At the top of the pyramid is unacceptable risk. Article 5 of the Regulation lists the practices that are banned outright: subliminal, manipulative or deceptive techniques that materially distort people's decision-making; exploitation of vulnerabilities linked to age, disability or a specific social or economic situation; social scoring, whether carried out by public authorities or by private actors; real-time remote biometric identification in publicly accessible spaces for law-enforcement purposes (with narrowly defined exceptions); emotion recognition in the workplace and in education (except for medical or safety reasons); and biometric categorisation to infer sensitive traits, among others. None of these applies to a mid-sized firm using AI to draft briefs, classify documents or assist clients, but it is worth knowing them so that your dossier for each system can explicitly rule them out.

In plain terms: the only realistic way for a firm to drift into this category would be to introduce an emotion-recognition system in interviews with job candidates during its own hiring process. If a team member suggests installing such a tool, the answer is no: there is no risk assessment to run, because Article 5 bans the practice outright.

2. High risk (Article 6 and Annex III) — full obligations

Article 6 of the Regulation sets two routes for classifying a system as high-risk: (a) systems that are a safety component of a product, or are themselves a product, covered by the EU harmonisation legislation listed in Annex I (toys, lifts, medical devices, vehicles and so on) and that require third-party conformity assessment; and (b) systems listed in Annex III, across the eight areas set out below. Most of the AI systems a typical firm uses fit neither route and, by default, are not high-risk.

Annex III of the Regulation lists the eight areas where an AI system counts as high-risk: biometrics, critical infrastructure, education and vocational training, employment and worker management, access to essential public and private services (public benefits, credit, insurance), law enforcement, migration, asylum and border control, and administration of justice and democratic processes. Point 8 is the one that brushes against private practice: it covers systems intended to assist a judicial authority in researching and interpreting facts and law, or in applying the law to a concrete set of facts, as well as systems used in a similar way in alternative dispute resolution. A private firm is not a judicial authority, so a drafting assistant you use internally does not sit here; a tool sold to a court would, and a firm that acts as arbitrator or mediator should check the dispute-resolution limb before using AI on those cases.

In practice, the only realistic route by which a conventional firm might end up using a high-risk system is if a provider offers one from Annex III — for example, an automated candidate-filtering system for the firm's own hiring process (point 4 of the Annex: employment and worker management). That system would fall under high risk and would pull in the obligations of Article 26 (below). So far, across the pilots we have run with firms in Spain, we have not encountered one.

3. Limited risk (Article 50) — transparency

This is where most of the systems a firm actually uses sit: customer-support chatbots on the website, draft generators, transcription or summarisation tools, conversational assistants that interact directly with a client. The main obligation is transparency: telling the user they are interacting with AI when this is not obvious from context, and marking any synthetic content as artificially generated where relevant. The detail in Article 50 is unpacked in the obligations section below.

4. Minimal risk — no Regulation-specific obligations

The last category is residual: anything that does not fall into the other three. It carries no Regulation-specific obligations beyond the general Article 4 literacy duty, which applies in all cases. It covers OCR on incoming invoices, internal document classifiers, spell-checkers, autocomplete, spam filters and similar tools, and most automated work inside a firm sits here. That does not mean you should ignore it; it means that running it requires nothing specific under the AI Act beyond training your team and applying normal professional due diligence.

A caveat: classification is not a fixed property of the software or of the provider. You, as deployer, have to check it against your specific use. The same underlying language model (say, OpenAI's GPT-4 or Anthropic's Claude) can be "limited risk" in a customer-support chatbot and "high risk" if you plug it into an automated exam-grading system, which falls under Annex III point 3 (education); repurposing a general tool for a high-risk use in this way can also turn you into its provider under Article 25. What matters is not the technology but the use case.
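The order of the four checks can be sketched as a short decision function. This is a simplification we wrote for illustration: the `UseCase` fields are hypothetical screening questions, not terms from the Regulation, and the sketch deliberately ignores the Article 6(3) derogation that lets some Annex III systems escape high risk, as well as the finer points of Article 50. Its value is the ordering: prohibited first, then high-risk, then transparency, with minimal risk as the residual case.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class UseCase:
    """Hypothetical screening answers for one system in one use case."""
    name: str
    article5_practice: bool = False         # matches any Article 5 prohibited practice
    annex_i_safety_component: bool = False  # Annex I product route (Art. 6(1))
    annex_iii_point: Optional[int] = None   # Annex III point 1-8, if any (Art. 6(2))
    interacts_with_people: bool = False     # e.g. a client-facing chatbot
    generates_content: bool = False         # drafts, summaries, images, audio


def risk_category(use: UseCase) -> str:
    """Return the first category that matches, checked from most to least severe."""
    if use.article5_practice:
        return "unacceptable"
    if use.annex_i_safety_component or use.annex_iii_point is not None:
        return "high"
    if use.interacts_with_people or use.generates_content:
        return "limited"
    # Residual category: nothing above applies.
    return "minimal"


# Same model, different use cases, different categories.
chatbot = UseCase("website chatbot", interacts_with_people=True, generates_content=True)
grading = UseCase("exam grading", generates_content=True, annex_iii_point=3)
ocr = UseCase("incoming-invoice OCR")
for use in (chatbot, grading, ocr):
    print(use.name, "->", risk_category(use))
```

Because the inputs describe the use case rather than the model, the same model lands in different categories depending on how it is deployed, which is the point of the caveat above.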

The staggered timetable: the dates to put in the diary

Article 113 of the Regulation sets entry into force on the twentieth day after publication in the EU Official Journal (published on 12 July 2024, in force since 1 August 2024) and a staggered application that is worth setting out in a single table. As of the date of publication of this article the official timetable is as follows. From 2 February 2025, Chapters I (general provisions, including Article 4 on AI literacy) and II (the Article 5 prohibited practices) apply. From 2 August 2025, Chapter III Section 4 (notifying authorities and notified bodies), Chapter V (general-purpose AI models), Chapter VII (governance), Chapter XII (penalties, including Article 99) and Article 78 (confidentiality) apply. From 2 August 2026, the Regulation applies in general. From 2 August 2027, Article 6(1) and the corresponding obligations (high-risk via Annex I safety components) apply.

| Date | What comes into force | What it means for a firm |
|---|---|---|
| 2 Feb 2025 | Chapters I and II: prohibited practices (Art. 5) and AI literacy (Art. 4) | Check that no system falls into the Article 5 list. Begin internal team training. |
| 2 Aug 2025 | GPAI models (Ch. V), governance (Ch. VII), penalties (Ch. XII, Art. 99) | Article 99 fines can be imposed from this date. The providers of the general-purpose models behind ChatGPT-style tools take on their own obligations; as a user you do not inherit them. |
| 2 Aug 2026 | General application of the Regulation | Full transparency obligations (Art. 50) and, where relevant, the Article 26 obligations (high-risk, Annex III). |
| 2 Aug 2027 | Article 6(1) and associated obligations | High-risk via Annex I safety components; almost no firm will be affected. |
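If you keep your compliance notes in a script or spreadsheet, the timetable reduces to a date comparison. A minimal sketch in Python, with the milestones copied from the table above; `MILESTONES`, `applicable_on` and `next_milestone` are our own illustrative names.

```python
from datetime import date
from typing import List, Optional, Tuple

# Article 113 milestones as summarised in the table above (start date, summary).
MILESTONES: List[Tuple[date, str]] = [
    (date(2025, 2, 2), "Chapters I and II: prohibited practices (Art. 5), AI literacy (Art. 4)"),
    (date(2025, 8, 2), "GPAI models (Ch. V), governance (Ch. VII), penalties (Ch. XII, Art. 99)"),
    (date(2026, 8, 2), "General application, including Art. 50 and Art. 26"),
    (date(2027, 8, 2), "Art. 6(1): high-risk via Annex I safety components"),
]


def applicable_on(day: date) -> List[str]:
    """Milestones whose start date is on or before `day`."""
    return [summary for start, summary in MILESTONES if start <= day]


def next_milestone(day: date) -> Optional[Tuple[date, str]]:
    """The first milestone still in the future on `day`, or None if all have passed."""
    upcoming = [m for m in MILESTONES if m[0] > day]  # list is already in date order
    return upcoming[0] if upcoming else None


today = date(2026, 4, 10)  # this article's publication date
print(applicable_on(today))
print(next_milestone(today))
```

On 10 April 2026 the first two milestones already apply and the next one is general application on 2 August 2026.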

Two things that matter more than the dates themselves:

- The Article 4 literacy obligation has applied since 2 February 2025. It is the one cross-cutting obligation that binds you today regardless of the risk level of your systems. That date has already passed, so if you have nothing written down about team training, that is the first gap to close.

- Sanctions are not retroactive but they are real. From 2 August 2025 onwards, Article 99 can be applied. The fact that you deployed a system before that date does not exempt you: non-compliance is assessed at the moment of inspection.

The obligations that apply to you as a deployer

This is the practical core of the post: what a firm using AI actually has to do, in order of how likely each obligation is to apply.

Article 4 — AI literacy

Article 4 of the Regulation introduces an obligation that gets relatively little airtime in the general press but is the most immediately relevant to your firm: providers and deployers must take measures to ensure a sufficient level of AI literacy among their staff and anyone operating the systems on their behalf. The level is assessed against technical knowledge, experience, training and the context of use. In practical terms: "we use ChatGPT" is not enough — you need to be able to show that the team has had baseline training on what they can feed into the tool, what they cannot, and how to validate its output.

What we treat as a reasonable baseline: a documented training session when the system is rolled out, an internal reference document with the usage policy, and a couple of worked examples run with the team. External certification is not required. What you will want is documentary evidence that the training actually took place, because that is what you can show if an authority asks.
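Keeping that evidence in a structured form makes gaps easy to spot. A minimal sketch, assuming a hypothetical training log that maps each person to the date of their documented session; `untrained_operators` and the names in the example are illustrative, not a prescribed format.

```python
from datetime import date
from typing import Dict, Iterable, List


def untrained_operators(operators: Iterable[str], training_log: Dict[str, date]) -> List[str]:
    """People who operate AI systems for the firm but have no recorded session."""
    return sorted(person for person in operators if person not in training_log)


# Hypothetical data: who uses AI tools at the firm, and who has been trained.
operators = ["ana", "luis", "marta"]
training_log = {"ana": date(2025, 3, 4), "luis": date(2025, 3, 4)}
print(untrained_operators(operators, training_log))
```

Here the check would flag "marta" as the person still needing a documented session.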

Article 50 — transparency towards the user

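Article 50 of the Regulation is the article most likely to touch your day-to-day, and it splits its transparency obligations between providers and deployers. Providers must (a) design systems that interact directly with people so that those people are told they are dealing with an AI system, unless this is obvious from the context (a customer-support chatbot on the firm's website is the typical case), and (b) mark synthetic output (text, image, audio, video) in a machine-readable way as artificially generated or manipulated. Paragraph 2 exempts systems that only perform an assistive function for standard editing or do not substantially alter the input, so a spell-checker is out and a text generator is in. Deployers must (c) inform people exposed to emotion-recognition or biometric-categorisation systems (unlikely in a typical firm) and (d) disclose deep fakes, as well as AI-generated text published to inform the public on matters of public interest, unless that text has undergone human review and someone holds editorial responsibility for it. In practice, check that the chatbot on your website tells visitors it is an AI, whether the provider builds that in or you configure it yourself; if you assembled the chatbot and put it into service under your own brand, that duty may fall on you as provider.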
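For the chatbot case, the disclosure can be enforced in code rather than left to the model's prompt. A minimal sketch, assuming a hypothetical `DisclosingChat` wrapper around whatever function produces the bot's replies. The wording of `AI_DISCLOSURE` is our own example, not text prescribed by Article 50, and the sketch does not cover the machine-readable marking of paragraph 2, which is a provider duty.

```python
from typing import Callable

# Example wording only; adapt it to the firm's own tone and languages.
AI_DISCLOSURE = "You are chatting with an AI assistant, not with a person at the firm."


class DisclosingChat:
    """Wraps a reply generator so the first reply in a conversation discloses AI use."""

    def __init__(self, generate_reply: Callable[[str], str]) -> None:
        self._generate_reply = generate_reply  # any function: user message -> reply text
        self._disclosed = False

    def reply(self, message: str) -> str:
        text = self._generate_reply(message)
        if not self._disclosed:
            self._disclosed = True
            return f"{AI_DISCLOSURE}\n\n{text}"
        return text


# Stand-in generator for the demo; in production this would call the provider's model.
chat = DisclosingChat(lambda msg: f"Thanks, we received: {msg}")
print(chat.reply("What documents do I need for my tax return?"))
print(chat.reply("And the deadline?"))
```

Putting the notice in the wrapper rather than in the model's instructions means it appears even if the model ignores its prompt.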

Article 14 — human oversight (a cross-cutting principle)

Article 14 of the Regulation requires high-risk AI systems to be designed and developed so that they can be effectively overseen by natural persons while in use. Paragraph 2 states the goal: prevent or minimise risks to health, safety or fundamental rights. Although this article formally applies only to high-risk systems, the principle it establishes ("the AI drafts, the person decides") is the same rule we apply to limited-risk and minimal-risk systems in a practice: no filing goes out under a professional's signature unless that professional has reviewed it.
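The "AI drafts, the person decides" rule can be built into the tooling so that it does not depend on anyone's memory. A minimal sketch, assuming a hypothetical `Draft` record and `release` function in whatever system sends documents out; the point is that an AI-generated draft with no named reviewer cannot leave.

```python
from dataclasses import dataclass
from typing import Optional


class ReviewRequired(Exception):
    """Raised when an AI-generated draft has not been approved by a professional."""


@dataclass
class Draft:
    text: str
    generated_by_ai: bool
    reviewed_by: Optional[str] = None  # name of the professional who approved it


def release(draft: Draft) -> str:
    """Return the text for sending only if any AI involvement has been reviewed."""
    if draft.generated_by_ai and not draft.reviewed_by:
        raise ReviewRequired("AI-generated draft needs professional review before it goes out")
    return draft.text


brief = Draft("Draft appeal brief...", generated_by_ai=True)
try:
    release(brief)
except ReviewRequired as err:
    print("Blocked:", err)
brief.reviewed_by = "signing partner"
print(release(brief))
```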

Article 26 — specific obligations if you reach high risk

If your firm does end up using a system classified as high-risk, Article 26 of the Regulation applies to you as a deployer. In short, it requires you to: use the system in accordance with the provider's instructions for use (paragraph 1); assign human oversight to people with the necessary competence, training and authority (paragraph 2); to the extent you control the input data, ensure it is relevant and sufficiently representative for the intended purpose (paragraph 4); monitor the system's operation and report risks and serious incidents to the provider and, where required, to the authorities (paragraph 5); keep the logs the system generates, to the extent they are under your control, for at least six months (paragraph 6); and inform the people subject to decisions the system makes or helps to make (paragraph 11). For most firms this article is reference material they will never need; for the rare firm working with an Annex III tool, it becomes the operational playbook.

Internal usage register

No article of the Regulation requires this register by name, but it follows logically from the four obligations above. For each AI system the firm uses, the internal register should record: which system it is and who the provider is, the use case, the data it processes, who supervises it, the risk category you have assigned and why, and when the entry was last updated. It is not a bureaucratic exercise; it is the foundation of any response to AESIA or the AEPD if they ever ask.
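The register needs no special software; a spreadsheet is enough. For firms that prefer to generate it, here is a minimal sketch in Python with one field per item listed above, exported to CSV. `RegisterEntry`, `to_csv` and the sample entry are hypothetical.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields
from datetime import date
from typing import List


@dataclass
class RegisterEntry:
    system: str         # which system it is
    provider: str       # who provides it
    use_case: str       # what the firm uses it for
    data: str           # which data it processes
    supervisor: str     # who oversees it
    risk_category: str  # unacceptable / high / limited / minimal
    rationale: str      # why that category was assigned
    last_updated: date  # when the entry was last reviewed


def to_csv(entries: List[RegisterEntry]) -> str:
    """Serialise the register to CSV text, one row per system."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=[f.name for f in fields(RegisterEntry)])
    writer.writeheader()
    for entry in entries:
        writer.writerow(asdict(entry))
    return buffer.getvalue()


ocr = RegisterEntry(
    system="Invoice OCR",
    provider="Example OCR vendor (hypothetical)",
    use_case="Extract data from incoming supplier invoices",
    data="Supplier invoices",
    supervisor="Accounts partner",
    risk_category="minimal",
    rationale="Internal; no client interaction; no legal-effect decisions; no Annex III point",
    last_updated=date(2026, 4, 10),
)
print(to_csv([ocr]))
```

A flat one-row-per-system format keeps the register readable by anyone at the firm and easy to hand over if an authority asks for it.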

How AEPD, AESIA and the AI Act fit together

The most common confusion when a firm starts looking into the Regulation is how the authorities share competence. In one sentence: the AEPD still rules on personal data, AESIA rules on the AI system itself, and the two coexist. In practice the boundary is not always clean and the same case can touch both, but the general principle holds.

Royal Decree 729/2023 of 22 August approved the Statute of the Spanish Agency for the Supervision of Artificial Intelligence (AESIA), created under the seventh additional provision of Law 28/2022 on fostering the ecosystem of start-ups and attached, at the time of its creation, to the Ministry of Economic Affairs and Digital Transformation through the Secretariat of State for Digitalisation and Artificial Intelligence. Its seat is in A Coruña, in the "La Terraza" building on the Jardines de Méndez Núñez, made available through a transfer of assets from the city council. It is one of the first national agencies set up in the EU specifically to supervise the AI Act; Spain moved ahead of most Member States by launching it before the Regulation had even been finally approved.

The Statute approved by RD 729/2023 defines the purpose of AESIA as minimising the risks that AI can pose and supporting the appropriate development and deployment of AI systems. At state level, the Agency acts as the authority responsible for the supervision and, where applicable, sanction of AI systems, with the explicit goal of eliminating or reducing risks to personal integrity, privacy, equal treatment, non-discrimination and other fundamental rights. Translated: AESIA is the Spanish authority that can fine you for non-compliance with the AI Act — but not for the personal-data side, which remains with AEPD.

The overlap with the GDPR matters and often raises questions. Everything to do with your client's personal data that feeds into the system (lawful basis, minimisation, transparency to the data subject, time limits for answering data-subject requests, 72-hour breach notification) remains under the GDPR and, in Spain, under the AEPD. We cover that territory in detail in what the AEPD expects when you automate with AI in your firm. What the AI Act adds is the layer of the system itself: how it is designed, how it is classified by risk, how it is supervised, and how the user is told they are interacting with AI. As a result, the same automation can give rise to one case at the AEPD for a data-protection breach and, in parallel, another at AESIA for an AI Act breach. The two do not add up automatically, but they can coexist.

Article 22 of the GDPR gives data subjects the right not to be subject to a decision based solely on automated processing, including profiling, that produces legal effects concerning them or similarly significantly affects them, with narrowly defined exceptions. The operational overlap with the AI Act: where GDPR Article 22 requires you to offer the affected person human intervention, AI Act Article 14 requires high-risk systems to be designed so that such intervention is actually effective. They are complementary, not redundant.

Sanctions: up to EUR 35 million or 7% of worldwide turnover

Article 99 of the Regulation structures the administrative fines in three tiers by severity. Top tier: up to EUR 35 000 000 or 7 % of total worldwide annual turnover in the preceding financial year, whichever is higher, for breaches of the prohibited practices in Article 5 (paragraph 3). Middle tier: up to EUR 15 000 000 or 3 % for non-compliance with the obligations applicable to providers, importers, distributors and deployers, including the Article 50 transparency obligations (paragraph 4). Lower tier: up to EUR 7 500 000 or 1 % for supplying incorrect, incomplete or misleading information to notified bodies or national competent authorities (paragraph 5). Paragraph 6 provides that for SMEs, including start-ups, each fine is capped at the lower of the percentage and the absolute amount, not the higher.
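The "whichever is higher / whichever is lower" rule is easy to get backwards, so here is the arithmetic as a minimal Python sketch. The tier amounts and percentages are those of Article 99(3) to (5) quoted above; the function name, the tier keys and the example turnover figures are our own illustrations. It computes the statutory ceiling only; actual fines are set case by case below it.

```python
# Article 99 tiers: (fixed cap in EUR, percentage of worldwide annual turnover).
TIERS = {
    "prohibited_practices": (35_000_000, 7),   # Art. 99(3)
    "operator_obligations": (15_000_000, 3),   # Art. 99(4)
    "incorrect_information": (7_500_000, 1),   # Art. 99(5)
}


def fine_ceiling(tier: str, worldwide_turnover_eur: int, is_sme: bool) -> float:
    """Maximum fine for a tier: the higher of the two caps, or the lower for SMEs (Art. 99(6))."""
    fixed_cap, percent = TIERS[tier]
    turnover_cap = worldwide_turnover_eur * percent / 100
    return min(fixed_cap, turnover_cap) if is_sme else max(fixed_cap, turnover_cap)


# Hypothetical small firm with EUR 2 million turnover vs. a large group with EUR 1 billion.
print(fine_ceiling("prohibited_practices", 2_000_000, is_sme=True))
print(fine_ceiling("prohibited_practices", 1_000_000_000, is_sme=False))
```

For the small firm, the ceiling is 7 % of EUR 2 million, that is EUR 140 000, not EUR 35 million; for the large group, 7 % of EUR 1 billion (EUR 70 million) exceeds the fixed cap and becomes the ceiling.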

For context: Article 83 of the GDPR caps fines at EUR 10 M or 2 % of worldwide turnover (lower tier) and EUR 20 M or 4 % (upper tier), so the AI Act's top tier of EUR 35 M or 7 % for prohibited practices goes well beyond it. Whether two fines for the same conduct can be stacked is a non bis in idem question resolved case by case; what is clear is that the highest ceiling an operator now faces is the AI Act's.

A contextual note that few commentaries mention: the headline Article 99 figures are written for large operators. For an SME the ceiling is the lower of the two amounts, so for a ten-person firm in Madrid the realistic maximum is a percentage of its turnover, not EUR 35 million, and the chance of a top-tier fine is low anyway. The chance of a sanction for gaps in the everyday obligations (a chatbot that never says it is an AI or, with a high-risk system, human oversight that exists only on paper) is not negligible, especially once AESIA starts inspecting systematically. The real cost of non-compliance is not at the top of the range; it is at the floor, where everyday fines land.

Frequently asked questions about the EU AI Act

What exactly is the EU AI Act?

It is Regulation (EU) 2024/1689 of the European Parliament and of the Council, commonly known as the "AI Act". It is the first European text that horizontally regulates artificial intelligence systems: it sorts them by risk, sets different obligations for providers and for deployers, and establishes a sanction regime. Published in the EU Official Journal on 12 July 2024, it entered into force on 1 August 2024, and its application is staggered between 2025 and 2027.

Does it apply to my firm if I only use ChatGPT and Copilot?

Yes, but only the deployer obligations — primarily Article 4 (literacy) and, where there is a client-facing chatbot, Article 50 (transparency). The bulk of the technical requirements in the Regulation are carried by the provider (OpenAI, Microsoft); you inherit a smaller, operational, documentary subset.

Am I a "provider" or a "deployer" under the Regulation?

In the vast majority of cases, a deployer. You only become a provider if you build or customise a system and place it on the market or put it into service under your own name, which brings much heavier obligations.

Is my invoice OCR system high-risk?

In practice, no. Incoming-invoice OCR is an internal document-processing tool that fits none of the eight Annex III areas and is not a safety component of any Annex I product. It is minimal risk, which means it has no AI-Act-specific obligations beyond the Article 4 literacy requirement.

How does the AI Act relate to the GDPR?

They are complementary, not alternatives. The GDPR governs the personal data flowing into the system (lawful basis, minimisation, data-subject rights, breach notification). The AI Act governs the system itself (design, risk classification, human oversight, transparency). The same automation running on client data usually has both regimes on top of it at the same time.

What is the difference between AEPD and AESIA?

The AEPD is Spain's data protection authority; it supervises and fines breaches of the GDPR and Spain's LOPD-GDD. AESIA, created by Royal Decree 729/2023 and headquartered in A Coruña, is Spain's AI Act supervisory authority; its purpose is to minimise the risks from the use of AI and it carries out supervision and, where appropriate, sanction of AI systems. In practice the same firm can end up dealing with both.

When does the Regulation actually apply to me?

Some parts already apply: prohibited practices and literacy since 2 February 2025, Article 99 penalties since 2 August 2025. General application starts on 2 August 2026, and the high-risk obligations via Annex I safety components on 2 August 2027. If you are reading this in the first half of 2026, the literacy obligation already binds you and the next milestone is general application in August.

How much can I be fined?

It depends on the Article 99 tier. Up to EUR 35 M or 7% of worldwide turnover for Article 5 prohibited practices; up to EUR 15 M or 3% for non-compliance with the obligations applicable to providers and deployers; up to EUR 7.5 M or 1% for supplying incorrect information. For SMEs, the lower figure applies, not the higher.

How we handle this at Gradion

Across the firms we have worked with so far, the real problem with the EU AI Act is not the size of the text but the order in which it is approached. Most firms begin by trying to "comply with the AI Act" as if it were one monolithic thing — read the 113 articles, take notes, draft a quarterly plan, ask the digital-law specialist for help. That approach fails because it mixes obligations that do not apply to them with those that do, and because it ends up producing generic documentation that would not survive twenty minutes of conversation with an inspector.

Our observation: risk classification comes before compliance. Every ten-day pilot we deliver begins with a system classification sheet: which of the four Regulation categories the system falls into, with the concrete reasoning ("this system processes incoming invoices, does not interact with the client, does not take decisions with legal effect and does not fit any point of Annex III, so it is minimal risk"), and the list of obligations that actually apply. That sheet becomes part of the pilot's dossier and is the first document an AESIA inspector would see if they ever looked. None of the systems we have deployed so far has landed in high risk; most sit in limited or minimal risk, and the associated obligations (transparency towards the user where relevant, human oversight by the signing professional, team literacy and an internal usage register) are digestible when you take them on from day one.

When we cross this with the data side under the GDPR and with what the AEPD expects, the result is a system that is ready for both possible inspections — AEPD for the data, AESIA for the system — without duplicating documentation or bloating the project. Gabriel, a Microsoft Certified Trainer in cloud and security, builds the infrastructure and compliance layer while I handle the automation and architecture. Both of us review every classification before a system is declared deployed. No one inherits opaque compliance when the pilot ends.

We do not promise that the AI Act is simple. We do promise that, for a firm that uses AI professionally but does not build it, the part of the Regulation that genuinely needs to be complied with is far smaller than it looks.

Is your team losing 15 hours a week on paperwork?

We solve it in 10 days, with a fixed-price pilot. Your automation reaches production with the AI Act classification already done and documented.

Tell us about your case →